Key Takeaways

- CBO campaigns starve new creatives by optimizing for cheap, early clicks

- Use ABO isolation to force fair spend on every single creative asset

- Adopt the 'One Creative, One Ad Set' rule for statistically significant data

- Apply a strict 48-hour rule to cut losers and identify true winners

- Automate the testing workflow to eliminate manual setup errors

Most advertisers use the "Spaghetti Method," letting Facebook's algorithm starve potential winners. Discover why CBO fails at testing and how the Scientific ABO Protocol forces fair spend to uncover your actual top-performing ads.

I want you to open your Ads Manager right now.

Go to your "Creative Testing" campaign. (You do have a separate campaign for testing, right? If not, we have a bigger problem, but let’s assume you do.)

Click on the Ad Set level. Then click on the Ads.

Look at the "Amount Spent" column.

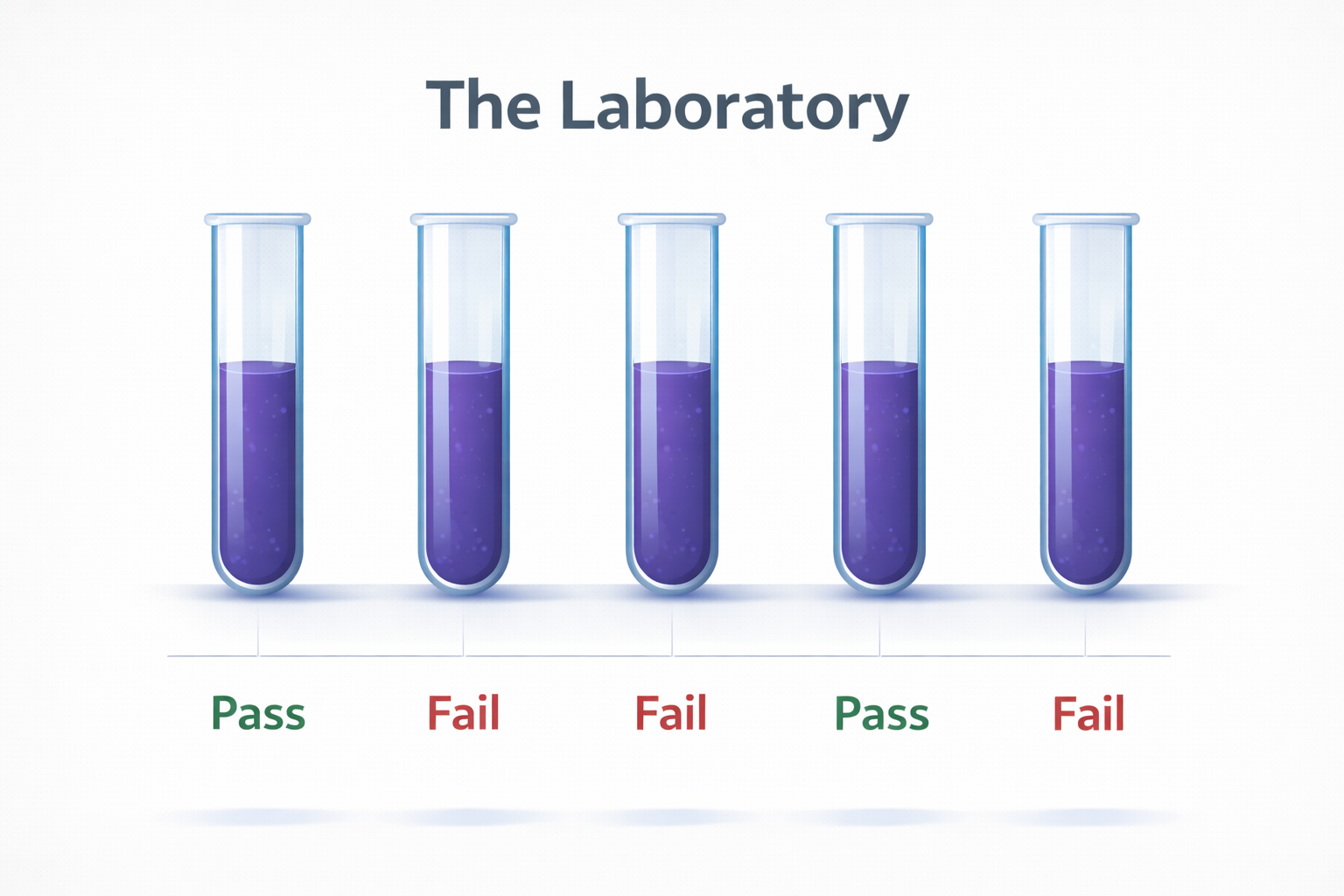

I am willing to bet money that it looks something like this:

Ad A: $42.50

Ad B: $3.10

Ad C: $0.45

Ad D: $0.00

Ad E: $0.00

You launched 5 new creatives. You wanted to know which one was the best.

But Facebook spent 92% of your budget on Ad A. Why? Because Ad A got a cheap click in the first hour. Or maybe someone commented on it. The algorithm grabbed onto that early signal and said, "This is the one!"

So, Ad A gets all the spend. It gets a few sales. You think, "Great, Ad A is a winner!"

But wait.

What about Ad B? What about Ad C? What about the ones that got zero spend?

You didn't test them. You starved them.
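If you want to put a number on the starvation, here is a minimal Python sketch using the spend figures from the example above. The `spend_shares` helper and the "less than half of an even split" cutoff for calling an ad starved are assumptions made for illustration, not an official metric.

```python
# Spend figures copied from the example above.
spend = {"Ad A": 42.50, "Ad B": 3.10, "Ad C": 0.45, "Ad D": 0.00, "Ad E": 0.00}


def spend_shares(spend_by_ad):
    """Return each ad's fraction of total spend (0.0 to 1.0)."""
    total = sum(spend_by_ad.values())
    if total == 0:
        return {ad: 0.0 for ad in spend_by_ad}
    return {ad: amount / total for ad, amount in spend_by_ad.items()}


shares = spend_shares(spend)
for ad, share in shares.items():
    print(f"{ad}: {share:.1%}")

# An even split across five ads would be 20% each.
fair_share = 1 / len(spend)

# Assumption: an ad counts as "starved" if it got under half of its fair share.
starved = [ad for ad, share in shares.items() if share < fair_share / 2]
print("Starved:", starved)
```

Run it against an export of your own "Amount Spent" column. If most of your ads land in the starved list, you never really tested them.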

This is what we call the "Spaghetti Method." You throw a bunch of stuff at the wall (CBO) and see what sticks. It feels like testing, but it’s actually gambling.

And it is burning 60% of your budget on false positives and missed opportunities.

The CBO Lie: Why Campaign Budget Optimization Fails at Testing

Facebook (Meta) pushes CBO (Campaign Budget Optimization) hard. They tell you, "Trust the algorithm! We know best!"

And for scaling, they are absolutely right. CBO is a beast for finding the most efficient conversion in a large pool of proven assets.

But for testing? CBO is a disaster.

The algorithm’s job in a CBO campaign is to get the cheapest result today. It is short-sighted. It optimizes for the lowest hanging fruit.

Creative testing requires the opposite approach. Testing requires fairness. It requires isolation. It requires giving every single variable an equal chance to prove itself.

If you put Usain Bolt in a race against a toddler, the algorithm (CBO) will bet on Bolt every time. But what if you’re trying to find out if the toddler is a math genius? The race (CBO) is the wrong test.

When you use CBO for creative testing, you introduce Variable Bias.

Ad C might have a lower CTR but a higher Conversion Rate (CVR). It’s a "slow burn" creative that attracts high-value customers. But because Ad A got more clicks early on, CBO starved Ad C before it could get a purchase.

You just killed your most profitable ad because the algorithm was impatient.
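To see how a "slow burn" creative can win on cost per purchase while losing on clicks, here is a quick worked example in Python. Every number in it (the CPM, both CTRs, both CVRs) is hypothetical and chosen only to illustrate the arithmetic.

```python
def cost_per_purchase(cpm, ctr, cvr):
    """Cost per purchase from CPM (cost per 1,000 impressions), CTR, and CVR."""
    clicks_per_1000 = 1000 * ctr
    purchases_per_1000 = clicks_per_1000 * cvr
    return cpm / purchases_per_1000


# Hypothetical numbers: both ads pay the same CPM.
CPM = 20.00
high_ctr_ad = cost_per_purchase(CPM, ctr=0.02, cvr=0.01)   # more clicks, fewer buyers
slow_burn_ad = cost_per_purchase(CPM, ctr=0.01, cvr=0.04)  # fewer clicks, more buyers

print(f"High-CTR ad:  ${high_ctr_ad:.2f} per purchase")
print(f"Slow-burn ad: ${slow_burn_ad:.2f} per purchase")
```

With these made-up inputs, the ad with half the click-through rate ends up with half the cost per purchase. An algorithm that only sees the early clicks never finds that out.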

The Scientific Solution: ABO Isolation

So, how do we fix this? How do we stop burning money on biased tests?

We stop acting like gamblers and start acting like scientists.

We use the Crush Scientific Testing Protocol.

The core of this protocol is simple but non-negotiable: One Creative, One Ad Set, One Budget.

This is ABO (Ad Set Budget Optimization) in its purest form.

Instead of dumping 5 ads into one ad set, we create 5 separate ad sets. Each ad set contains exactly one ad.

Why do we use ABO for testing?

- Forced Spend: We force Facebook to spend money on every single creative. Ad C cannot hide. It must perform.

- Statistical Significance: We ensure that every ad gets enough impressions to generate valid data.

- Clean Data: When we look at the results, we know exactly why an ad failed. It didn't fail because it was starved; it failed because it sucked.

Why Automation is Essential for Scale

Now, I know what you’re thinking.

"But creating 5 ad sets for every test? That takes forever! I have to duplicate, rename, change the ad, set the budget..."

You are right. Doing this manually is a nightmare. It’s tedious, prone to errors, and impossible to scale. If you test 20 creatives a week, that’s 20 ad sets to duplicate, rename, and budget by hand, every single week. You will go insane.

This is why most people revert to the Spaghetti Method. It’s easier.

This is why we built Crush.

Crush automates the entire Scientific Testing Protocol. You don't have to manually create ad sets. You don't have to worry about naming conventions.

Here is the Crush workflow:

- You upload your 5 (or 50) new creatives.

- You select the "Scientific Test" template.

- You click "Launch."

That’s it.

In the background, Crush automatically:

- Creates a new Campaign.

- Creates a dedicated Ad Set for each creative (ABO).

- Sets the budget (e.g., 1x your target CPA).

- Applies the correct naming conventions.

- Publishes everything to Facebook.
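To make the structure concrete, here is a rough Python sketch of the plan those steps describe: one campaign, one ad set per creative, each with its own budget. This is not Crush's actual code and it does not call the Meta API. The class names, the naming pattern, and the file names are all hypothetical, and the plan only lives in memory.

```python
from dataclasses import dataclass, field


@dataclass
class AdSetPlan:
    name: str
    budget: float
    creative: str  # exactly one creative per ad set


@dataclass
class CampaignPlan:
    name: str
    ad_sets: list = field(default_factory=list)


def build_test_plan(creatives, target_cpa, test_name, budget_multiplier=1.0):
    """Plan one ad set per creative, each with its own budget (ABO)."""
    campaign = CampaignPlan(name=f"TEST | {test_name}")
    for index, creative in enumerate(creatives, start=1):
        campaign.ad_sets.append(
            AdSetPlan(
                # Hypothetical naming convention: test name, position, creative.
                name=f"{test_name} | {index:02d} | {creative}",
                # Budget follows the "1x your target CPA" example above.
                budget=target_cpa * budget_multiplier,
                creative=creative,
            )
        )
    return campaign


plan = build_test_plan(
    ["hook_a.mp4", "hook_b.mp4", "ugc_c.mp4"],
    target_cpa=30.0,
    test_name="batch-01",
)
for ad_set in plan.ad_sets:
    print(ad_set.name, ad_set.budget)
```

The point of the sketch is the shape of the data: every creative gets its own ad set and its own budget, so no ad can quietly absorb another ad's spend.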

But it doesn't stop there.

Automated Decision Making: The "Judge" Algorithm

Launching is only half the battle. The other half is the decision-making.

In a manual world, you have to check these ad sets every day. You have to decide when to turn them off.

"This one spent $15 and has no clicks... should I kill it?"

"This one got a sale but it cost $80... maybe it was a fluke?"

Crush removes this emotional labor. We apply a strict 48-Hour Rule.

Once the ads are live, the Crush "Judge" algorithm monitors them. It ignores the noise. It looks for signal.

If an ad set hits the kill condition (e.g., Spend > Target CPA with 0 Sales), Crush kills it instantly. No mercy. No "maybe."

If an ad set hits the win condition (e.g., ROAS > 2.0), Crush flags it as a "Winner" and prepares it for the Scaling Campaign.
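The two example conditions above can be written down as a small decision function. Treat this as a sketch of the idea, not the real "Judge" algorithm: the `judge` function and `Verdict` names are made up, the thresholds are just the examples from the text, and the 48-hour window is left out because how it combines with these conditions isn't specified here.

```python
from enum import Enum


class Verdict(Enum):
    KILL = "kill"
    WINNER = "winner"
    KEEP_RUNNING = "keep_running"


def judge(spend, sales, revenue, target_cpa, roas_threshold=2.0):
    """Apply the example kill and win conditions to one ad set."""
    # Kill condition from the text: spend above target CPA with zero sales.
    if sales == 0 and spend > target_cpa:
        return Verdict.KILL
    # Win condition from the text: ROAS (revenue / spend) above the threshold.
    if spend > 0 and revenue / spend > roas_threshold:
        return Verdict.WINNER
    # Assumption: anything else keeps running until a condition is met.
    return Verdict.KEEP_RUNNING


print(judge(spend=35.0, sales=0, revenue=0.0, target_cpa=30.0))
print(judge(spend=40.0, sales=2, revenue=120.0, target_cpa=30.0))
print(judge(spend=15.0, sales=0, revenue=0.0, target_cpa=30.0))
```

Writing the rules down like this is what removes the "maybe it was a fluke?" debate. The same inputs always produce the same verdict.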

The Result: Unshakeable Confidence in Your Winners

When you switch to this method, something amazing happens.

You stop guessing.

You can trust that your winners are actual winners. They aren't just ads that got lucky in a CBO pool. They are gladiators that fought in the arena alone and survived.

This means when you move them to your Scaling Campaign, they hold up. They handle higher budgets. They stabilize your account.

You stop burning 60% of your budget on bad tests. You channel that money into finding more winners.

The math is simple. The logic is undeniable. But the execution is hard—unless you have Crush.

Stop throwing spaghetti. Start running a lab.

Start testing with Crush today.


Written by

Albertas Pocius

Co-founder, CMO

Albertas is the Co-Founder and CMO of TryCrush.ai, bringing elite-level performance marketing expertise to the platform. With over $50M in combined Facebook and TikTok ad spend, Albertas has launched and scaled dozens of projects from zero, driven by a deep obsession with data and experimentation. He has trained 20+ media buyers, consulted for 100+ companies, and is widely recognized as one of Europe’s early TikTok pioneers, likely the first to scale campaigns beyond $100K per day. Today, he also teaches advertising strategy at two colleges, shaping the next generation of media buyers.

Stop Guessing.

Start Crushing.

AI Ad Manager trained by world-class media buyers to generate your creatives and optimize your ads 24/7.

Learn more

Continue Reading

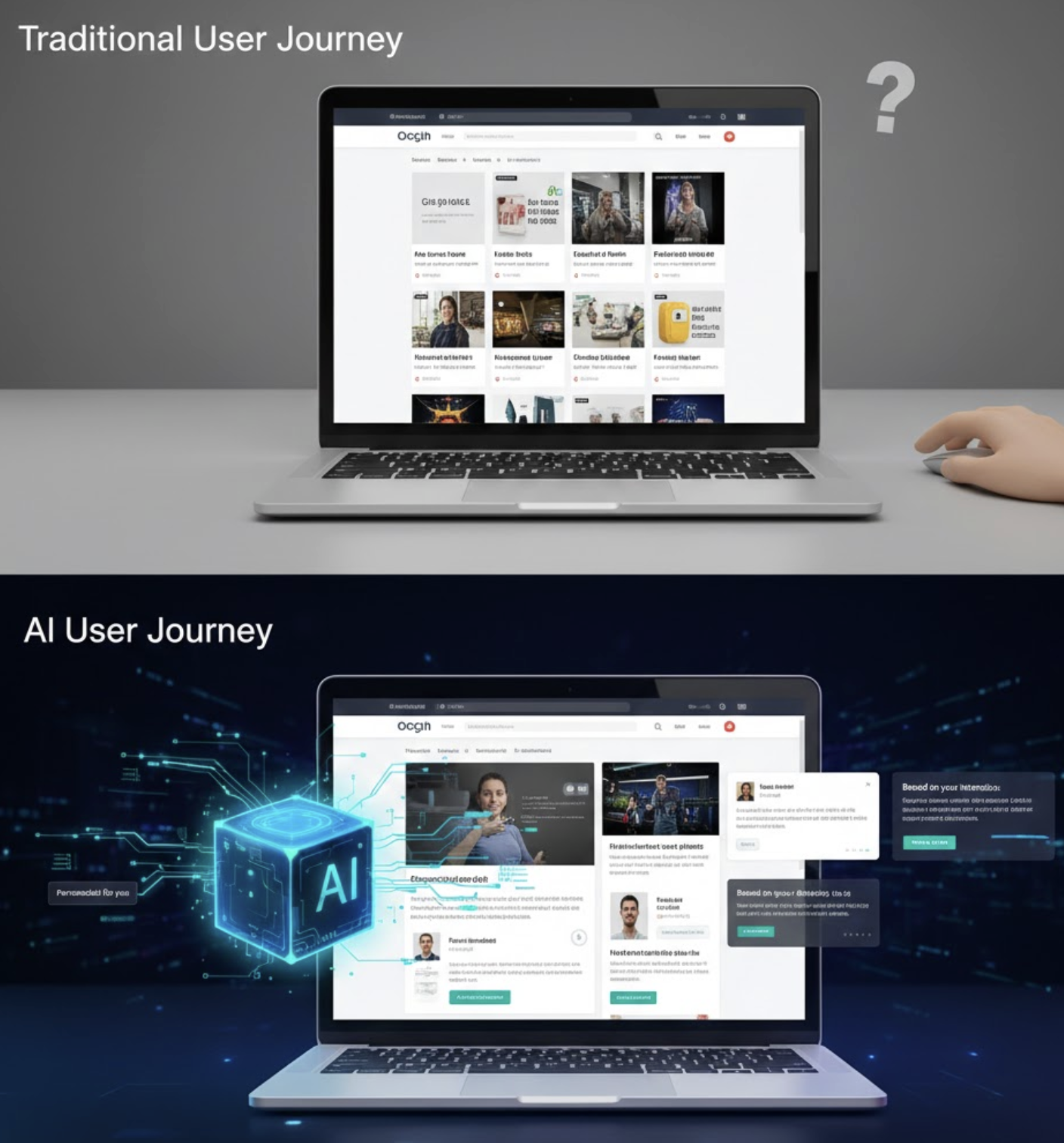

What is Media Buying in 2026? The AI Revolution

The digital revolution changed advertising forever, but AI is completely rewriting the rules. Learn what media buying is today, why manual bidding is dead, and how modern marketers use creative strategy and AI algorithms to scale profitably.

What is Conversion Rate? How to Optimize It With AI

Understanding your conversion rate is the key to multiplying your business revenue without increasing ad spend. Move past slow, manual A/B testing and discover how AI-driven hyper-personalization is revolutionizing conversion rate optimization.

What is CAC? How AI Can Cut Your Acquisition Costs in Half

Customer Acquisition Cost (CAC) is the ultimate metric for business survival. Learn the true CAC meaning, the golden LTV:CAC ratio, and how AI-driven creative testing can systematically cut your acquisition costs in half.